MotionEmotion

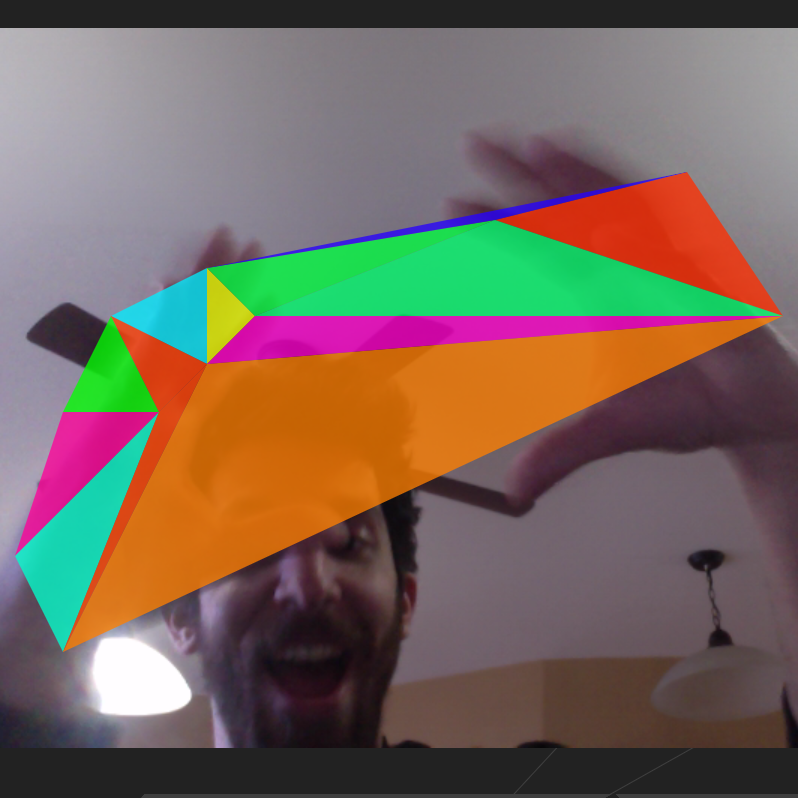

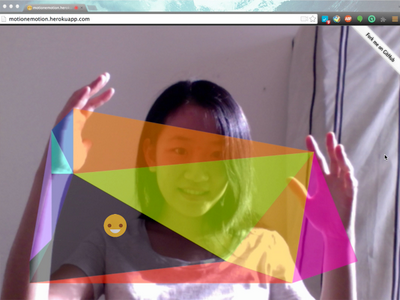

MotionEmotion is an emotion- and gesture-based arpeggiator and synthesizer. It uses the webcam to detect points of motion on the screen and to track the user’s emotion. It draws triangles between the points of motion, and each triangle represents a note in an arpeggio or scale that is determined by the user’s emotion.
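The exact mapping from emotion to scale is not spelled out here, so the following is only a rough sketch of the idea. The emotion labels, the scale chosen for each one, and the names `SCALES`, `arpeggioForTriangles`, and `midiToFrequency` are all assumptions made for illustration, not the project's actual code. It assigns each triangle the next degree of the scale picked by the current emotion, moving up an octave whenever the scale wraps around.

```typescript
// Hypothetical emotion labels; the project's real set is not stated in this post.
type Emotion = "happy" | "sad" | "angry" | "surprised";

// Assumed emotion-to-scale mapping, as semitone offsets from the root note.
const SCALES: Record<Emotion, number[]> = {
  happy: [0, 2, 4, 7, 9],          // major pentatonic
  sad: [0, 3, 5, 7, 10],           // minor pentatonic
  angry: [0, 1, 4, 5, 7, 8, 10],   // Phrygian dominant
  surprised: [0, 2, 4, 6, 8, 10],  // whole tone
};

// Convert a MIDI note number to a frequency in Hz (A4 = MIDI 69 = 440 Hz).
function midiToFrequency(midi: number): number {
  return 440 * Math.pow(2, (midi - 69) / 12);
}

// Give each triangle one note: triangle i plays scale degree i,
// climbing one octave each time the scale wraps around.
function arpeggioForTriangles(
  triangleCount: number,
  emotion: Emotion,
  rootMidi: number = 60 // middle C, an arbitrary default
): number[] {
  const scale = SCALES[emotion];
  const notes: number[] = [];
  for (let i = 0; i < triangleCount; i++) {
    const octave = Math.floor(i / scale.length);
    notes.push(rootMidi + 12 * octave + scale[i % scale.length]);
  }
  return notes;
}
```

With six triangles and a "happy" face, this sketch yields the MIDI notes 60, 62, 64, 67, 69, 72. Each number can be converted with `midiToFrequency` and handed to whatever synth plays the arpeggio.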

I collaborated with Karen Peng, who came up with the initial idea. I focused on sonifying the points of motion using the Tone.js library and implemented the initial face tracking and emotion detection using clmtrackr.js.

This project is a Chrome Experiment.

We started working on this project under the name “yayaya” at the Monthly Music Hackathon in August 2014 (video | blogged at evolver.fm).

Here’s a short demo of a more recent version with face tracking and arpeggios.