Hereafter Institute

In August 2016, I developed a data-driven music framework for Gabriel Barcia-Colombo’s Hereafter Institute at the Los Angeles County Museum of Art (LACMA).

Via LACMA:

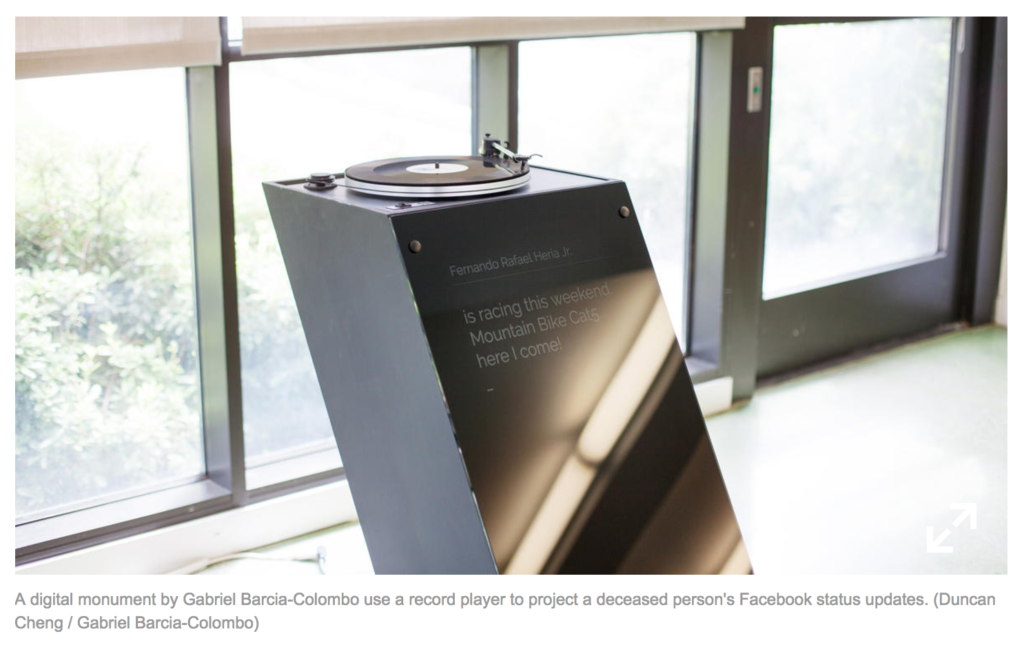

Gabriel Barcia-Colombo’s Hereafter Institute offers participants the chance to plan their digital afterlife and learn about the artist’s technological solutions for the preservation of the digital soul.

As part of the interactive experience, we imagine a future in which people’s social media presence is preserved on a vinyl record. Atop a turntable monument built by Ben Light, each record “plays back” an entire Facebook timeline as audio data. During playback, each post’s text is displayed on the monument via a frequency-shift keying system by Pedro G.C. Oliveira.
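For readers unfamiliar with frequency-shift keying (FSK), here is a minimal sketch of the general idea: each bit of the text is sent as a short burst of one of two tones. This is not Pedro's implementation. The sample rate, baud rate, tone frequencies, and function names below are all assumptions chosen for illustration.

```python
import math

# All constants are illustrative assumptions, not the values used in the installation.
SAMPLE_RATE = 44100   # audio samples per second
BAUD = 300            # bits per second
FREQ_ZERO = 1200.0    # tone (Hz) that represents a 0 bit
FREQ_ONE = 2200.0     # tone (Hz) that represents a 1 bit


def text_to_bits(text):
    """Return the UTF-8 bytes of `text` as a flat list of bits, most significant bit first."""
    bits = []
    for byte in text.encode("utf-8"):
        for shift in range(7, -1, -1):
            bits.append((byte >> shift) & 1)
    return bits


def fsk_modulate(bits):
    """Turn a list of bits into audio samples in [-1.0, 1.0] using binary FSK.

    The phase carries over from one bit to the next, so the waveform
    has no jump at bit boundaries (continuous-phase FSK).
    """
    samples_per_bit = SAMPLE_RATE // BAUD
    samples = []
    phase = 0.0
    for bit in bits:
        freq = FREQ_ONE if bit else FREQ_ZERO
        step = 2.0 * math.pi * freq / SAMPLE_RATE
        for _ in range(samples_per_bit):
            samples.append(math.sin(phase))
            phase += step
    return samples
```

Calling `fsk_modulate(text_to_bits("hello"))` yields a list of floats that could be written to a WAV file. A receiver would recover the text by measuring which of the two tones dominates each bit-length window.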

My task was to create a system that transforms this data into music in a way that captures the character of each individual Facebook post during playback. I built upon a sentiment analysis script by Sam Lavigne, used the Python music theory library music21 to output MIDI mapped to each post, and created a session in a digital audio workstation (DAW).
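To give a rough sense of how a post might become notes, here is a simplified sketch rather than the actual framework. It assumes a sentiment score between -1 and 1 is already available for each post. The mapping rules (major scale for non-negative sentiment, natural minor for negative, word length picking the scale degree) and the function names are hypothetical choices made for this example.

```python
# Semitone offsets from the tonic for each scale degree.
MAJOR_STEPS = [0, 2, 4, 5, 7, 9, 11]
MINOR_STEPS = [0, 2, 3, 5, 7, 8, 10]  # natural minor


def post_to_pitches(text, sentiment, tonic=60):
    """Map one post to a list of MIDI pitch numbers (hypothetical mapping).

    `sentiment` is assumed to lie in [-1, 1]: non-negative posts use a
    major scale, negative posts a natural minor scale. Each word selects
    a scale degree from its length. `tonic` is a MIDI note (60 = middle C).
    """
    steps = MAJOR_STEPS if sentiment >= 0 else MINOR_STEPS
    return [tonic + steps[len(word) % len(steps)] for word in text.split()]


def pitches_to_stream(pitches, quarter_length=0.5):
    """Build a music21 Stream with one note per MIDI pitch.

    music21 is imported here so the mapping above can be used
    (and tested) without the library installed.
    """
    from music21 import note, stream

    melody = stream.Stream()
    for midi_number in pitches:
        n = note.Note()
        n.pitch.midi = midi_number
        n.quarterLength = quarter_length
        melody.append(n)
    return melody
```

With music21 installed, `pitches_to_stream(post_to_pitches("happy birthday to you", 0.8)).write('midi', fp='post.mid')` would write a short MIDI file that can then be imported into a DAW session.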

Example (using test data):

Some earlier experiments: